Word embedding

| Machine learning and data mining |

|---|

|

|

Machine learning venues

|

|

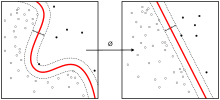

Word embedding is the collective name for a set of language modeling and feature learning techniques in natural language processing (NLP) in which words or phrases from the vocabulary are mapped to vectors of real numbers. Conceptually, it involves a mathematical embedding from a space with one dimension per word to a continuous vector space of much lower dimension.
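The mapping described above can be illustrated with a minimal sketch. The three-word vocabulary, the two-dimensional vectors, and the function names below are made up for illustration and do not come from any trained model; the point is only that looking up a row of an embedding table is equivalent to multiplying a one-dimension-per-word (one-hot) vector by that table.

```python
# Toy vocabulary and a hand-written embedding table (illustrative values only).
vocab = ["king", "queen", "apple"]
word_to_index = {word: i for i, word in enumerate(vocab)}

# One row per word, with 2 dimensions instead of len(vocab) dimensions.
embedding_table = [
    [0.9, 0.1],  # king
    [0.8, 0.2],  # queen
    [0.1, 0.9],  # apple
]


def one_hot(word):
    """Sparse representation: one dimension per vocabulary word."""
    vec = [0.0] * len(vocab)
    vec[word_to_index[word]] = 1.0
    return vec


def embed(word):
    """Dense representation: look up the word's row in the table."""
    return embedding_table[word_to_index[word]]


def embed_via_matmul(word):
    """Same vector, computed as one_hot(word) times the embedding table."""
    x = one_hot(word)
    dims = len(embedding_table[0])
    return [
        sum(x[i] * embedding_table[i][d] for i in range(len(vocab)))
        for d in range(dims)
    ]
```

In a realistic setting the vocabulary has tens of thousands of entries or more, while the embedding has far fewer dimensions, and the table values are learned rather than written by hand.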

Methods to generate this mapping include neural networks,[1] dimensionality reduction on the word co-occurrence matrix,[2][3][4] probabilistic models,[5] and explicit representation in terms of the context in which words appear.[6]
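As a rough illustration of the last of these approaches, an explicit representation based on context, the sketch below counts which words occur near each word and compares words by the cosine similarity of those counts. The tiny corpus, the window size, and the function names are assumptions chosen for the example; it does not perform the dimensionality reduction step, which would additionally factorize the resulting co-occurrence matrix.

```python
from collections import Counter, defaultdict
from math import sqrt


def cooccurrence_vectors(sentences, window=2):
    """Map each word to a Counter of words seen within `window` positions."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            lo = max(0, i - window)
            hi = min(len(tokens), i + window + 1)
            for j in range(lo, hi):
                if j != i:
                    vectors[word][tokens[j]] += 1
    return vectors


def cosine(u, v):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(u[k] * v[k] for k in u if k in v)
    norm_u = sqrt(sum(c * c for c in u.values()))
    norm_v = sqrt(sum(c * c for c in v.values()))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


# Hypothetical toy corpus, far too small for meaningful statistics.
corpus = [
    "the cat drinks milk",
    "the dog drinks water",
    "the cat chases the dog",
]
vectors = cooccurrence_vectors(corpus)
```

With this corpus, "milk" and "water" share the context word "drinks", so `cosine(vectors["milk"], vectors["water"])` is higher than the similarity between "milk" and "chases".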

Word and phrase embeddings, when used as the underlying input representation, have been shown to improve performance in NLP tasks such as syntactic parsing[7] and sentiment analysis.[8]

Development of technique

Development of the word embedding technique began around 2000. In a series of papers on "neural probabilistic language models", Bengio et al. proposed reducing the high dimensionality of word representations in context by "learning a distributed representation for words" (Bengio et al., 2003).[9] Roweis and Saul published in Science a method called "locally linear embedding" (LLE) for discovering representations of high-dimensional data structures.[10] The area developed gradually and took off after 2010, partly because of important advances in the quality of the vectors and the training speed of the models.

There are many branches and many research groups working on word embeddings. In 2013, a team at Google led by Tomas Mikolov created word2vec, a word embedding toolkit that can train vector space models faster than previous approaches.[11] Most new word embedding techniques rely on a neural network architecture instead of more traditional n-gram models and unsupervised learning.[12]

For biological sequences: BioVectors

Word embeddings for n-grams in biological sequences (e.g. DNA, RNA, and proteins) for bioinformatics applications have been proposed by Asgari and Mofrad.[13] The representation is named bio-vectors (BioVec) for biological sequences in general, with protein-vectors (ProtVec) for proteins (amino-acid sequences) and gene-vectors (GeneVec) for gene sequences, and it can be widely used in applications of deep learning in proteomics and genomics. The results presented by Asgari and Mofrad[13] suggest that BioVectors can characterize biological sequences in terms of biochemical and biophysical interpretations of the underlying patterns.

Thought vectors

Thought vectors are an extension of word embeddings to entire sentences or even documents. Some researchers hope that these can improve the quality of machine translation.[14][15]

Software

Software for training and using word embeddings includes Tomas Mikolov's Word2vec, Stanford University's GloVe,[16] and Deeplearning4j. Principal component analysis (PCA) and t-distributed stochastic neighbour embedding (t-SNE) are both used to reduce the dimensionality of word vector spaces and to visualize word embeddings and clusters.[17]
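The PCA step mentioned above can be sketched without any external library. The following is a minimal illustration, assuming four hand-written 4-dimensional "word vectors" (not taken from any trained model) and using power iteration to find the top two principal components; in practice a numerical library would normally be used instead, and the function name `pca_2d` is hypothetical.

```python
import random


def pca_2d(vectors, iterations=200, seed=0):
    """Project row vectors onto their top two principal components."""
    n, d = len(vectors), len(vectors[0])
    means = [sum(row[j] for row in vectors) / n for j in range(d)]
    centered = [[row[j] - means[j] for j in range(d)] for row in vectors]
    # Sample covariance matrix (d x d).
    cov = [
        [sum(r[a] * r[b] for r in centered) / (n - 1) for b in range(d)]
        for a in range(d)
    ]

    rng = random.Random(seed)
    components = []
    for _ in range(2):
        v = [rng.uniform(-1, 1) for _ in range(d)]
        for _ in range(iterations):
            # Remove directions already found, so the next component is new.
            for c in components:
                proj = sum(x * y for x, y in zip(v, c))
                v = [x - proj * y for x, y in zip(v, c)]
            # Power iteration step: multiply by the covariance and normalize.
            v = [sum(cov[a][b] * v[b] for b in range(d)) for a in range(d)]
            norm = sum(x * x for x in v) ** 0.5
            if norm == 0:
                break  # no variance left in any remaining direction
            v = [x / norm for x in v]
        components.append(v)

    # Coordinates of each centered vector along the two components.
    return [
        [sum(x * y for x, y in zip(row, c)) for c in components]
        for row in centered
    ]


# Illustrative vectors: two "royalty" words and two "fruit" words.
words = ["king", "queen", "apple", "pear"]
toy_vectors = [
    [0.9, 0.8, 0.1, 0.0],
    [0.8, 0.9, 0.0, 0.1],
    [0.1, 0.0, 0.9, 0.8],
    [0.0, 0.1, 0.8, 0.9],
]
points = pca_2d(toy_vectors)
```

Each entry of `points` is a 2-D coordinate that could be passed to a plotting tool. In this toy data the first coordinate separates the two groups of words; the sign of each component is arbitrary.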

References

1. Mikolov, Tomas; Sutskever, Ilya; Chen, Kai; Corrado, Greg; Dean, Jeffrey (2013). "Distributed Representations of Words and Phrases and their Compositionality". arXiv:1310.4546 [cs.CL].

2. Lebret, Rémi; Collobert, Ronan (2013). "Word Emdeddings through Hellinger PCA". arXiv:1312.5542 [cs.CL].

3. Levy, Omer; Goldberg, Yoav (2014). Neural Word Embedding as Implicit Matrix Factorization (PDF). NIPS.

4. Li, Yitan; Xu, Linli (2015). Word Embedding Revisited: A New Representation Learning and Explicit Matrix Factorization Perspective (PDF). Int'l J. Conf. on Artificial Intelligence (IJCAI).

5. Globerson, Amir (2007). "Euclidean Embedding of Co-occurrence Data" (PDF). Journal of Machine Learning Research.

6. Levy, Omer; Goldberg, Yoav (2014). Linguistic Regularities in Sparse and Explicit Word Representations (PDF). CoNLL. pp. 171–180.

7. Socher, Richard; Bauer, John; Manning, Christopher; Ng, Andrew (2013). Parsing with compositional vector grammars (PDF). Proc. ACL Conf.

8. Socher, Richard; Perelygin, Alex; Wu, Jean; Chuang, Jason; Manning, Chris; Ng, Andrew; Potts, Chris (2013). Recursive Deep Models for Semantic Compositionality Over a Sentiment Treebank (PDF). EMNLP.

9. "A Neural Probabilistic Language Model". doi:10.1007/3-540-33486-6_6#page-1 (inactive 2016-07-13).

10. Roweis, Sam T.; Saul, Lawrence K. (2000). "Nonlinear Dimensionality Reduction by Locally Linear Embedding". Science. 290 (5500): 2323. Bibcode:2000Sci...290.2323R. doi:10.1126/science.290.5500.2323. PMID 11125150.

11. word2vec

12. "A Scalable Hierarchical Distributed Language Model".

13. Asgari, Ehsaneddin; Mofrad, Mohammad R.K. (2015). "Continuous Distributed Representation of Biological Sequences for Deep Proteomics and Genomics". PLoS ONE. 10 (11): e0141287. Bibcode:2015PLoSO..1041287A. doi:10.1371/journal.pone.0141287.

14. Kiros, Ryan; Zhu, Yukun; Salakhutdinov, Ruslan; Zemel, Richard S.; Torralba, Antonio; Urtasun, Raquel; Fidler, Sanja (2015). "Skip-thought vectors". arXiv:1506.06726 [cs.CL].

15. "thoughtvectors".

16. "GloVe".

17. Ghassemi, Mohammad; Mark, Roger; Nemati, Shamim (2015). "A Visualization of Evolving Clinical Sentiment Using Vector Representations of Clinical Notes" (PDF). Computing in Cardiology.